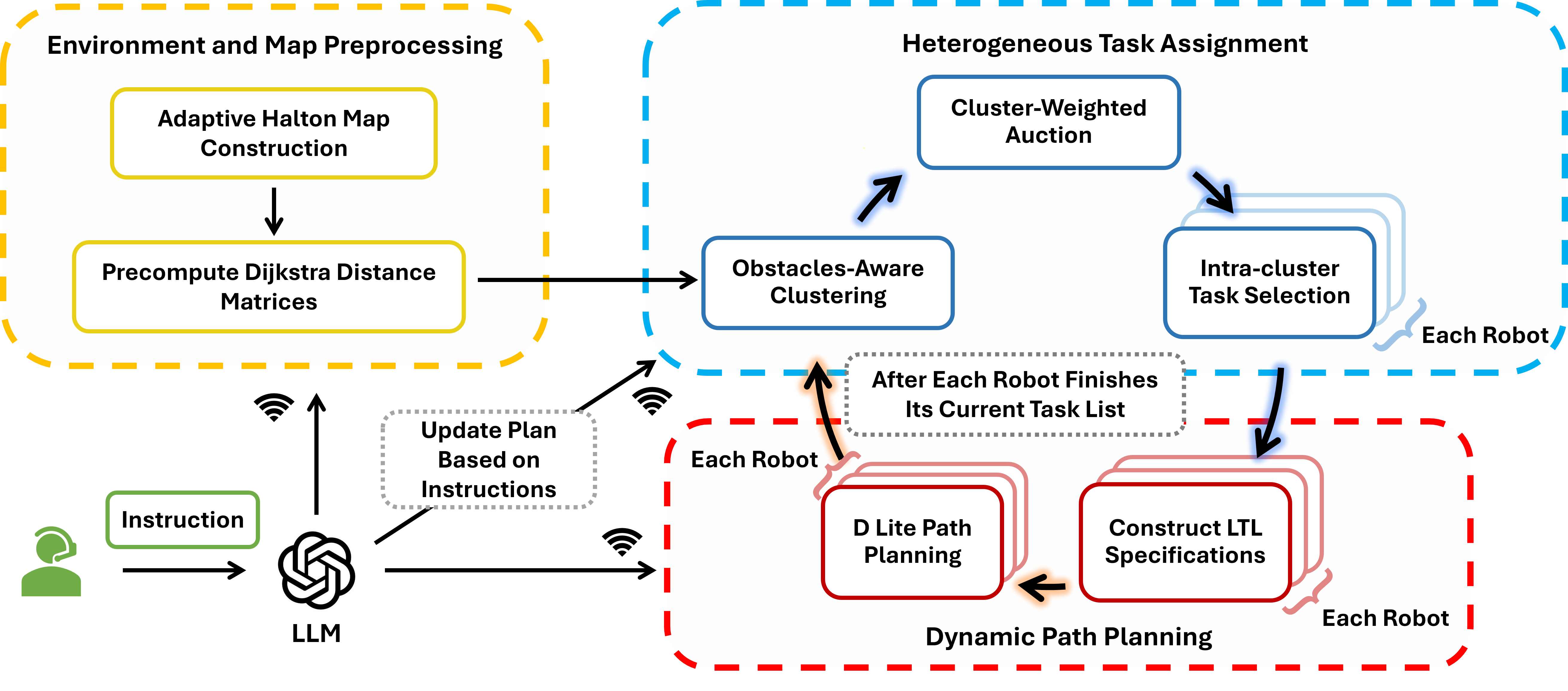

Framework Overview

OATH

Overview of OATH — a novel obstacle-aware multi-agent task assignment and planning framework.

Multi-Agent Task Assignment and Planning (MATP) has attracted growing attention but remains challenging in terms of scalability, spatial reasoning, and adaptability in obstacle-rich environments.

To address these challenges, we propose OATH (Adaptive Obstacle-Aware Task Assignment and Planning for Heterogeneous Robot Teaming). Our framework advances MATP by introducing two novel obstacle-aware strategies for task allocation. First, we develop an adaptive Halton sequence map that adjusts sampling density based on obstacle distribution; to our knowledge, this is the first application of Halton sampling with obstacle-aware adaptation in MATP. Second, we integrate Dijkstra-based task-to-task distance matrices that encode traversability. Combined, these strategies significantly improve allocation quality in obstacle-rich environments. For task assignment, we propose a cluster–auction–selection framework that integrates obstacle-aware clustering with weighted auctions and intra-cluster task selection. These mechanisms jointly enable effective coordination among heterogeneous robots while maintaining scalability and near-optimal allocation performance. In addition, our framework leverages an LLM to interpret human instructions and directly guide the planner in real time.
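To make the idea of obstacle-adaptive Halton sampling concrete, here is a minimal Python sketch. It is not the OATH implementation: the disc-shaped obstacles, the `near` clearance threshold, the `far_keep` thinning fraction, and the choice to sample more densely near obstacles are all assumptions made for illustration. Only the radical-inverse construction of the Halton sequence itself is standard.

```python
import math


def halton(index: int, base: int) -> float:
    """Radical-inverse (van der Corput) value of a 1-based index in `base`."""
    result, fraction = 0.0, 1.0
    while index > 0:
        fraction /= base
        result += fraction * (index % base)
        index //= base
    return result


def adaptive_halton_map(n_candidates, obstacles, width, height,
                        near=1.0, far_keep=0.3):
    """Sample free-space points, thinning those far from obstacles.

    obstacles: list of (cx, cy, radius) discs (hypothetical representation).
    near:      clearance below which every free sample is kept (assumed).
    far_keep:  fraction of samples kept elsewhere (assumed).
    """
    samples = []
    for i in range(1, n_candidates + 1):
        x = halton(i, 2) * width
        y = halton(i, 3) * height
        clearance = min(
            (math.hypot(x - cx, y - cy) - r for cx, cy, r in obstacles),
            default=math.inf,
        )
        if clearance <= 0:
            continue  # the point lies inside an obstacle
        # A third Halton dimension (base 5) serves as a deterministic
        # thinning variate, so open space is sampled more sparsely.
        if clearance <= near or halton(i, 5) < far_keep:
            samples.append((x, y))
    return samples


OBSTACLES = [(5.0, 5.0, 1.0)]  # one disc obstacle, illustrative only
nodes = adaptive_halton_map(500, OBSTACLES, width=10.0, height=10.0)
```

The thinning decision uses an extra Halton dimension rather than an index stride, because taking every k-th index of a Halton sequence can destroy its uniformity in the dimension whose base divides k.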

We validate OATH in both NVIDIA Isaac Sim and real-world hardware experiments using TurtleBot platforms, demonstrating substantial improvements in task assignment quality, scalability, adaptability to dynamic changes, and overall execution performance compared to state-of-the-art MATP baselines.

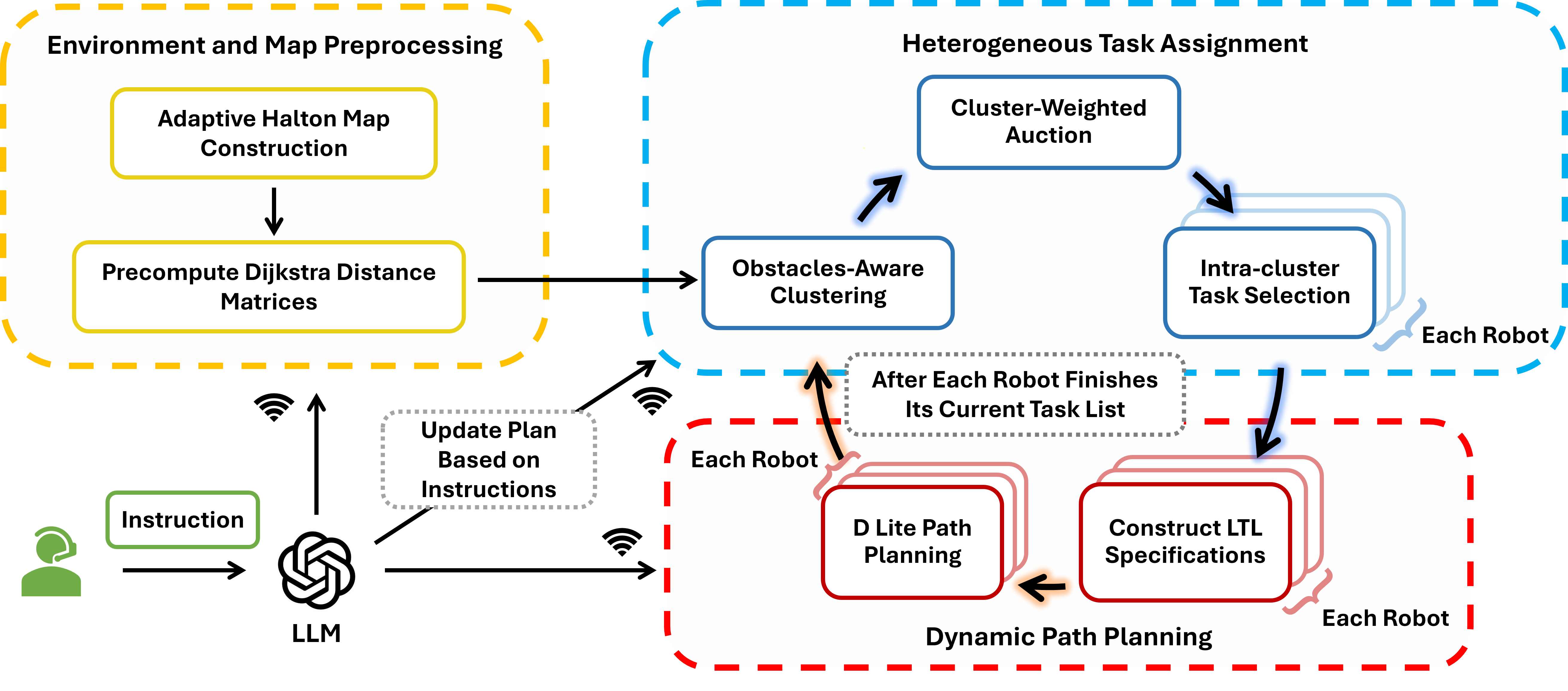

Comparison of heterogeneous task assignment results under different numbers of task types. Each subfigure illustrates the final task assignment for teams operating with 2, 3, or 5 task types, respectively.

Simulation with two ground robots and two drones in Isaac Sim, where the ground robots handle both red and blue tasks while the drones only handle blue tasks. The exclamation marks indicate the task locations.

Our framework also accounts for differences in kinematic and dynamic characteristics among heterogeneous robots, such as varying speeds.

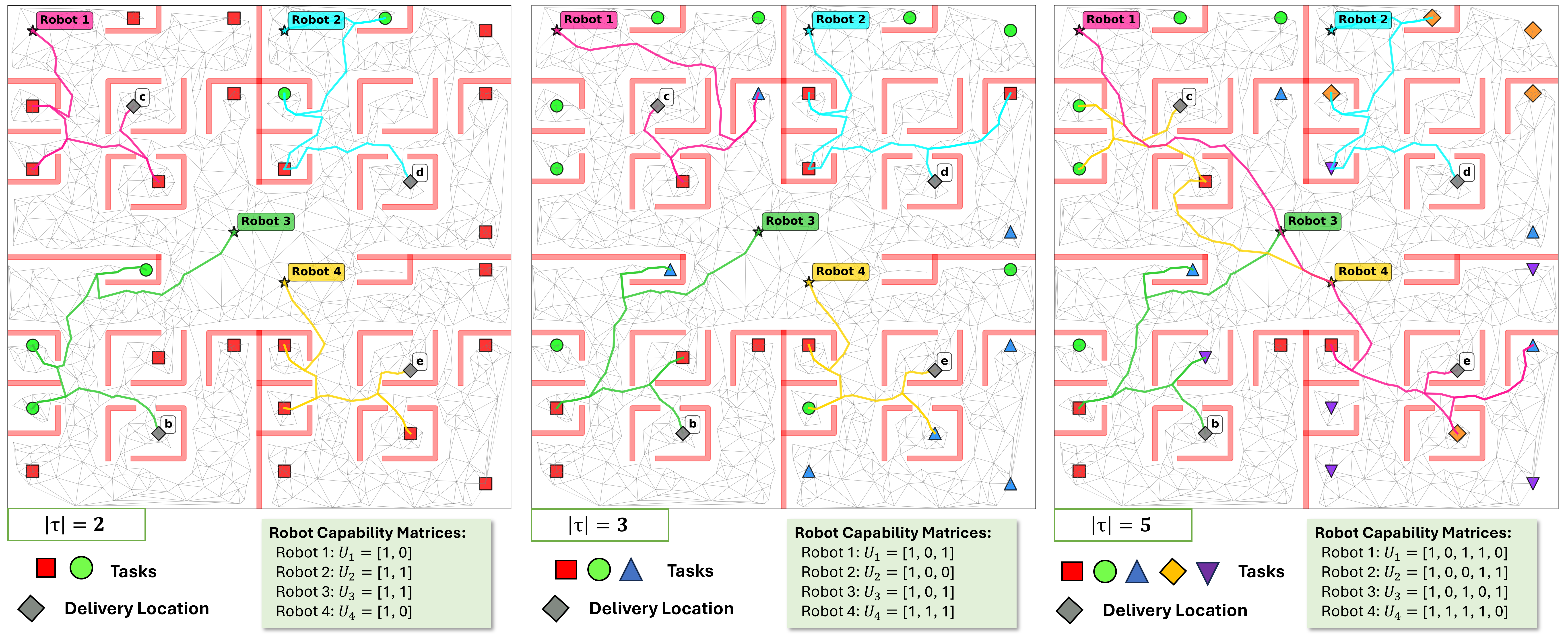

A fully integrated online pipeline with LLM-guided interaction for multi-robot systems. It interprets natural language inputs and supports real-time replanning in response to human instructions.

We validate the OATH framework on physical robot platforms to demonstrate real-world applicability. The following videos show hardware experiments with heterogeneous robot teams executing obstacle-aware task assignments.

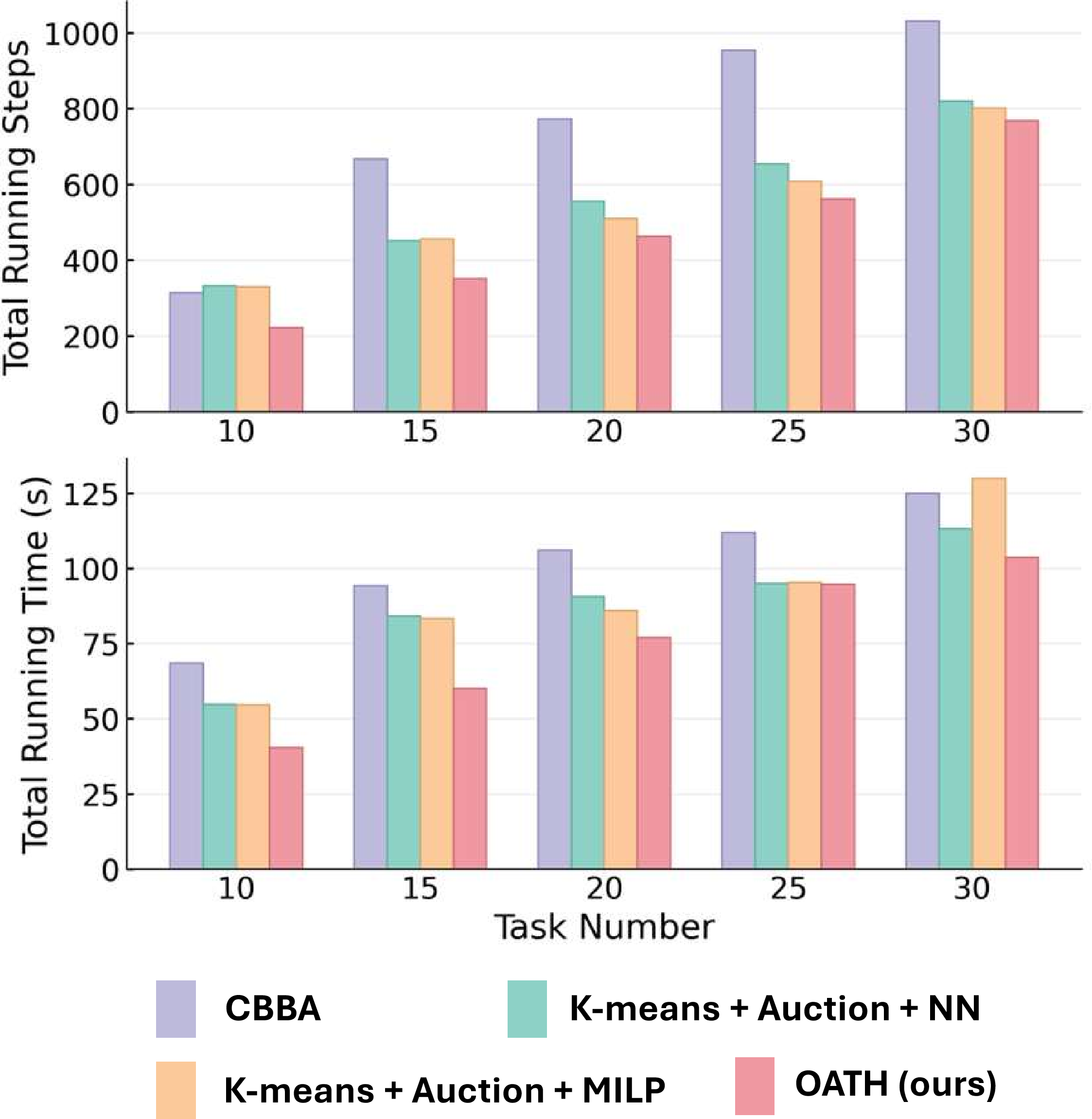

We compare OATH against three baselines: CBBA (consensus-based bundle algorithm), KAN (k-means clustering + auction + nearest-neighbor heuristic with Euclidean distances), and KAM (k-means clustering + auction + MILP-based intra-cluster solver with Dijkstra distances). All variants share the same path planning algorithm.

| Method | Clustering | Intra-Cluster |
|---|---|---|
| CBBA | Bundle | Bundle Order |
| KAN | k-means | NN |
| KAM | k-means | MILP |
| OATH (ours) | Obstacle-Aware Cluster | MILP |
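The difference between Euclidean and Dijkstra distances, which separates KAN from KAM and OATH, can be illustrated on a small occupancy grid. The sketch below assumes a 4-connected grid with unit step cost and a hand-made map; the grid, the task cells, and the function names are hypothetical and not taken from the OATH code.

```python
import heapq
import math


def dijkstra_grid(grid, start):
    """Shortest path lengths from `start` on a 4-connected grid ('#' = obstacle)."""
    rows, cols = len(grid), len(grid[0])
    dist = {start: 0}
    heap = [(0, start)]
    while heap:
        d, (r, c) = heapq.heappop(heap)
        if d > dist[(r, c)]:
            continue  # stale heap entry
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] != "#":
                nd = d + 1
                if nd < dist.get((nr, nc), math.inf):
                    dist[(nr, nc)] = nd
                    heapq.heappush(heap, (nd, (nr, nc)))
    return dist


def task_distance_matrix(grid, tasks):
    """Task-to-task matrix of traversable path lengths (inf if unreachable)."""
    matrix = []
    for src in tasks:
        dist = dijkstra_grid(grid, src)
        matrix.append([dist.get(dst, math.inf) for dst in tasks])
    return matrix


GRID = [
    "..#..",
    "..#..",
    "..#..",
    ".....",
]
TASKS = [(0, 1), (0, 3), (3, 2)]  # (row, col) cells, illustrative only

matrix = task_distance_matrix(GRID, TASKS)
euclidean_01 = math.dist(TASKS[0], TASKS[1])
print(matrix)        # [[0, 8, 4], [8, 0, 4], [4, 4, 0]]
print(euclidean_01)  # 2.0
```

Tasks 0 and 1 are only 2 cells apart in a straight line but 8 steps apart once the wall is respected, so a Euclidean metric would tend to group them together even though travelling between them is costly.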

Total Steps

| Tasks | CBBA | KAN | KAM | OATH |
|---|---|---|---|---|
| 10 | 314 | 332 | 330 | 222 |
| 15 | 667 | 451 | 456 | 351 |
| 20 | 773 | 555 | 510 | 463 |
| 25 | 954 | 654 | 608 | 562 |
| 30 | 1031 | 820 | 802 | 768 |

Total Running Time (s)

| Tasks | CBBA | KAN | KAM | OATH |
|---|---|---|---|---|
| 10 | 68.53 | 54.79 | 54.60 | 40.36 |
| 15 | 94.20 | 84.13 | 83.30 | 60.11 |
| 20 | 106.02 | 90.66 | 85.96 | 76.98 |
| 25 | 111.96 | 95.02 | 95.35 | 94.76 |
| 30 | 124.94 | 113.14 | 129.95 | 103.63 |

| Tasks | Total Distance: OATH | Total Distance: MILP | Gap to MILP (%) | Gap to LB (%) | Time (s): OATH | Time (s): MILP |
|---|---|---|---|---|---|---|
| 9 | 88.108 | 67.043 | 23.91 | 0.00 | 0.0716 | 3.96 |
| 12 | 102.446 | 85.381 | 16.66 | 0.00 | 0.0837 | 32.94 |
| 15 | 125.051 | 108.226 | 13.45 | 6.07 | 0.2011 | ≥600 |
| 18 | 146.529 | 133.006 | 9.23 | 16.69 | 0.3237 | ≥600 |
| 21 | 208.357 | 155.572 | 25.33 | 22.12 | 0.3663 | ≥600 |
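The page does not state how "Gap to MILP (%)" is computed. The reported values are consistent with the gap being taken relative to the OATH distance, that is, 100 × (OATH − MILP) / OATH. The short check below reproduces the column from the two distance columns under that assumption; the formula is inferred from the numbers rather than quoted from the authors.

```python
# (tasks, OATH distance, MILP distance, reported gap to MILP in %)
ROWS = [
    (9, 88.108, 67.043, 23.91),
    (12, 102.446, 85.381, 16.66),
    (15, 125.051, 108.226, 13.45),
    (18, 146.529, 133.006, 9.23),
    (21, 208.357, 155.572, 25.33),
]


def gap_to_milp(oath: float, milp: float) -> float:
    """Percentage gap, normalised by the OATH distance (inferred convention)."""
    return 100.0 * (oath - milp) / oath


computed = [round(gap_to_milp(oath, milp), 2) for _, oath, milp, _ in ROWS]
print(computed)  # [23.91, 16.66, 13.45, 9.23, 25.33]
```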

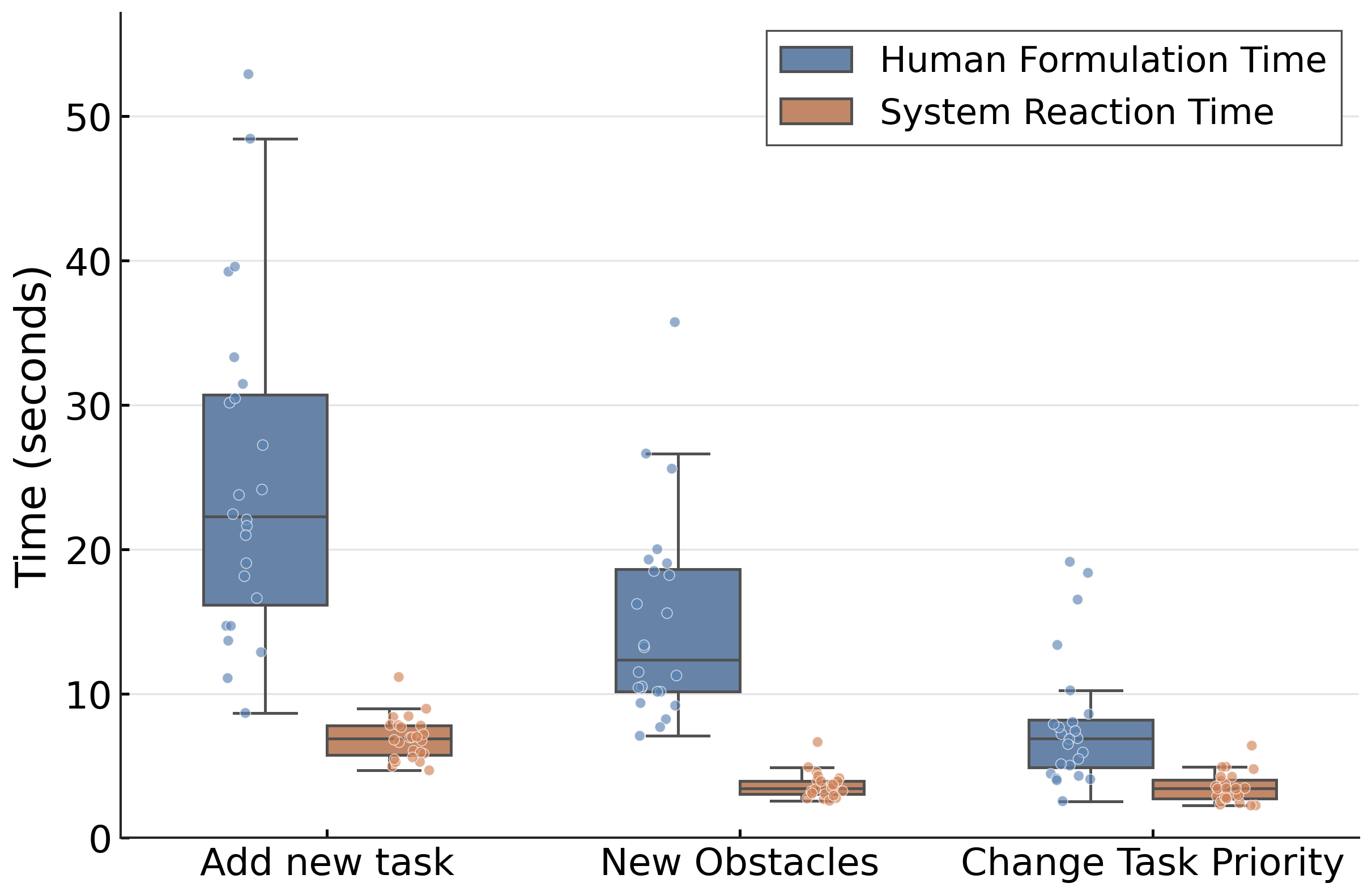

In addition to evaluating intent parsing accuracy and system performance impact, we conduct a user study to quantify human-in-the-loop efficiency and analyze the latency of the LLM-guided pipeline.

We recruit eight participants to evaluate the efficiency of human interventions. Each participant performs three trials for each intervention intent, resulting in 24 trials per intent. In each trial, participants freely design their own instructions: they may introduce new tasks or new obstacles at arbitrary locations in the environment, without being restricted to predefined templates or fixed coordinates.

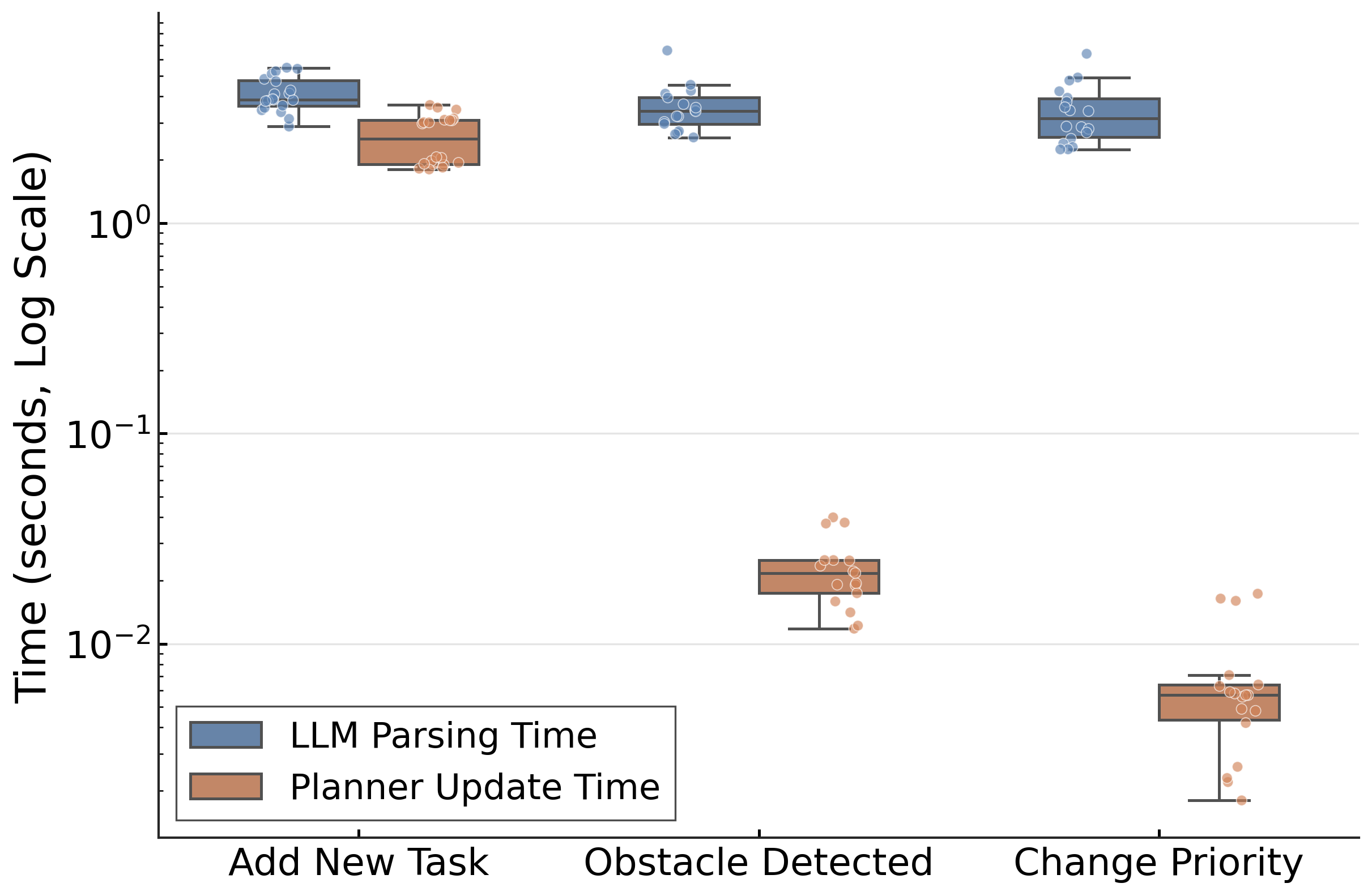

To evaluate the efficiency and latency characteristics of the LLM-guided pipeline, we conduct two complementary experiments. The first experiment examines the overall human-in-the-loop interaction by measuring human formulation time and total system reaction time. The second experiment further decomposes the system reaction time into LLM inference time and planner update time, allowing us to analyze the source of latency under different intervention types.

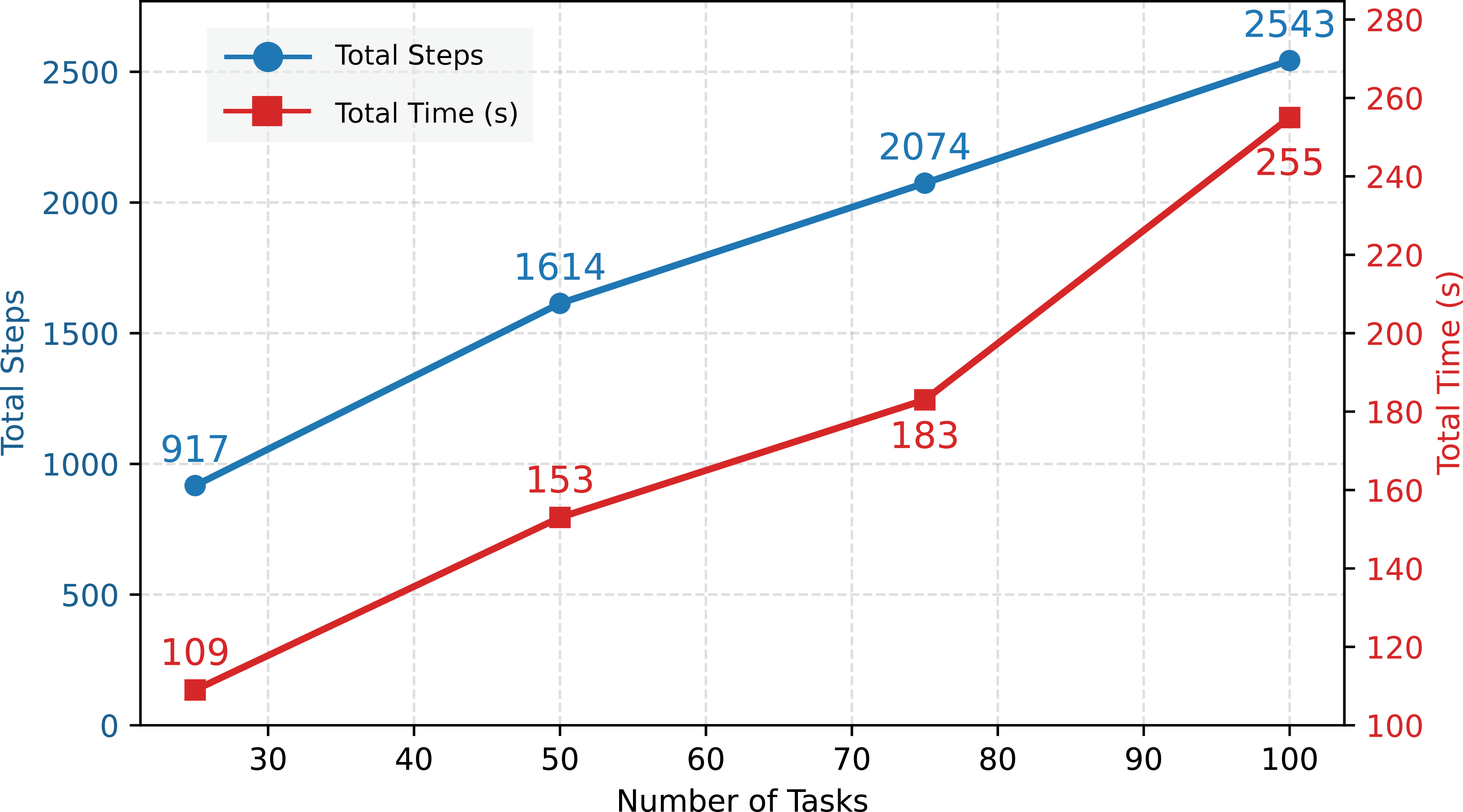

OATH introduces an adaptive Halton sequence that dynamically adjusts sampling density based on obstacle distribution. In addition, the proposed hierarchical cluster–auction–task selection scheme generalizes to any number of task types and reduces allocation complexity while respecting robot capacity and capability constraints. Together, these components enable scalable and near-optimal task assignment for heterogeneous robot teams operating in obstacle-rich environments.
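The auction stage, with its capability and capacity constraints, can be pictured with a deliberately simplified sketch. Everything below is an assumption for illustration: the `Robot` and `Cluster` fields, the sequential greedy auction, and the bid defined as straight-line travel time (distance divided by speed). OATH itself uses obstacle-aware distances and a weighted auction whose exact form is not given on this page.

```python
import math
from dataclasses import dataclass


@dataclass
class Robot:
    name: str
    position: tuple
    speed: float        # assumed units: m/s
    capabilities: set   # task types the robot can execute
    capacity: int       # maximum number of tasks it may take


@dataclass
class Cluster:
    name: str
    centroid: tuple
    task_types: set     # task types present in the cluster
    size: int           # number of tasks in the cluster


def bid(robot, cluster, load):
    """Travel-time bid, or None if the robot cannot serve the cluster."""
    if not cluster.task_types <= robot.capabilities:
        return None  # capability constraint violated
    if load + cluster.size > robot.capacity:
        return None  # capacity constraint violated
    return math.dist(robot.position, cluster.centroid) / robot.speed


def auction(robots, clusters):
    """Give each cluster to the lowest feasible bidder, one cluster at a time."""
    load = {r.name: 0 for r in robots}
    assignment = {}
    for cluster in clusters:
        best = None
        for robot in robots:
            b = bid(robot, cluster, load[robot.name])
            if b is not None and (best is None or b < best[0]):
                best = (b, robot.name)
        assignment[cluster.name] = best[1] if best else None
        if best:
            load[best[1]] += cluster.size
    return assignment


robots = [
    Robot("ground", (0.0, 0.0), 1.0, {"red", "blue"}, capacity=4),
    Robot("drone", (0.0, 0.0), 2.0, {"blue"}, capacity=3),
]
clusters = [
    Cluster("A", (4.0, 0.0), {"red"}, size=2),
    Cluster("B", (0.0, 4.0), {"blue"}, size=3),
]
result = auction(robots, clusters)
print(result)  # {'A': 'ground', 'B': 'drone'}
```

In this toy setup the drone cannot bid on the red cluster, and the ground robot lacks the remaining capacity for the blue one, so each constraint decides one of the two awards.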

Beyond task assignment, OATH integrates LLMs as persistent interpreters throughout the execution phase. Unlike prior approaches that employ LLMs only for initialization, our framework continuously leverages LLMs to translate natural language instructions into structured constraints and task updates. This design ensures ongoing adaptability to dynamic human intent, unforeseen obstacles, and mission changes.
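One way to picture the translation from natural language into structured task updates is a small, validated schema sitting between the LLM and the planner. The sketch below is hypothetical: the JSON fields, the two intents (adding a task or an obstacle, as in the user study), and the dictionary-based planner state are assumptions, and no actual LLM call is made. The reply string stands in for model output.

```python
import json
from dataclasses import dataclass

ALLOWED_INTENTS = {"add_task", "add_obstacle"}  # assumed intent set


@dataclass(frozen=True)
class PlannerUpdate:
    intent: str
    position: tuple
    task_type: str = ""


def parse_update(llm_reply: str) -> PlannerUpdate:
    """Validate a JSON reply (hypothetical schema) before it reaches the planner."""
    data = json.loads(llm_reply)
    intent = data["intent"]
    if intent not in ALLOWED_INTENTS:
        raise ValueError(f"unsupported intent: {intent!r}")
    x, y = data["position"]
    return PlannerUpdate(intent, (float(x), float(y)), data.get("task_type", ""))


def apply_update(state: dict, update: PlannerUpdate) -> dict:
    """Return a new planner state; the caller would then trigger replanning."""
    new_state = {
        "tasks": list(state["tasks"]),
        "obstacles": list(state["obstacles"]),
    }
    if update.intent == "add_task":
        new_state["tasks"].append((update.position, update.task_type))
    else:
        new_state["obstacles"].append(update.position)
    return new_state


# Stand-in for the LLM's structured output to an instruction such as
# "add a blue task at (3, 4)".
reply = '{"intent": "add_task", "position": [3, 4], "task_type": "blue"}'
state = {"tasks": [], "obstacles": []}
state = apply_update(state, parse_update(reply))
print(state)  # {'tasks': [((3.0, 4.0), 'blue')], 'obstacles': []}
```

Rejecting unknown intents at the parsing boundary keeps malformed model output from silently changing the plan.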